Research Lead

Led a team of developers building an email auto-response platform that government workers use to reply to mass emails.

React Node Express

Research Assistant

Implemented a program that uses predictive modeling techniques to forecast public sentiment and attitudes toward the Ukraine war.

Python NumPy Pandas

Seas The Throne

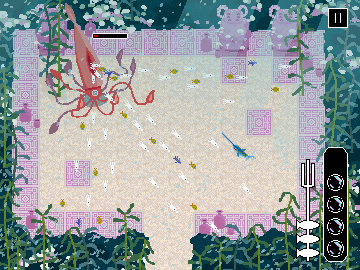

Worked with a team of 8 developers to create a dungeon-crawler-style game. The game has multiple boss fights, levels, and Easter eggs.

Java LibGDX Agile

CS @ Cornell - Looking for Internship

I am a junior Computer Science major and Information Science minor interested in data-science-related research.

I have experience working on large-scale projects in Java, such as the one I worked on with 8 other developers for CS 3152: Game Development. In this project, I designed a large inheritance tree of over 30 classes to model the game objects, and we structured the game around it.

I also have experience with unit and integration testing, which we used during the development of this project. Working on this team project taught me a lot about debugging and writing effective documentation for other developers.

Finally, I have a passion for creating solutions to interesting problems and for learning more about upcoming technologies and frameworks.

About Me

In my free time I enjoy climbing and going to the gym. I am also part of the cybersecurity club. Sometimes I work on my own software-related projects, some of which are listed here:

- Web Scraping Research: GitHub

- Spotify Clone: GitHub

- Drone sentiment analysis: GitHub

- N-body simulation: GitHub

- AWS Coding Challenge: GitHub

Most of my recent projects are on GitHub, where my progress can be tracked. All my projects have public repositories, so the code is free to view or copy.